Aside from Android and its Pixel brand, Google has put a lot of effort into improving its software services. Following the reveal of Bard AI, the software giant announced big changes to several of its products during its “Live from Paris” event. More specifically, Google Maps, Search, Lens, and Translate are all getting major updates and new features. Let’s take a look!

Google Maps

First up is “Immersive View,” which is starting to roll out on Google Maps. At launch, the feature will be available in five cities: London, Los Angeles, New York, San Francisco, and Tokyo, with launches planned for more cities, including Venice, Florence, Amsterdam, and Dublin.

Google also announced that it will expand its Live View feature to more European cities in the coming months, including Barcelona, Madrid, and Dublin. Meanwhile, Indoor Live View will soon be available in over a thousand urban locations worldwide, including airports and shopping malls in cities such as London, Paris, Berlin, Madrid, Barcelona, Prague, Frankfurt, Tokyo, Sydney, Melbourne, São Paulo, Singapore, and Taipei.

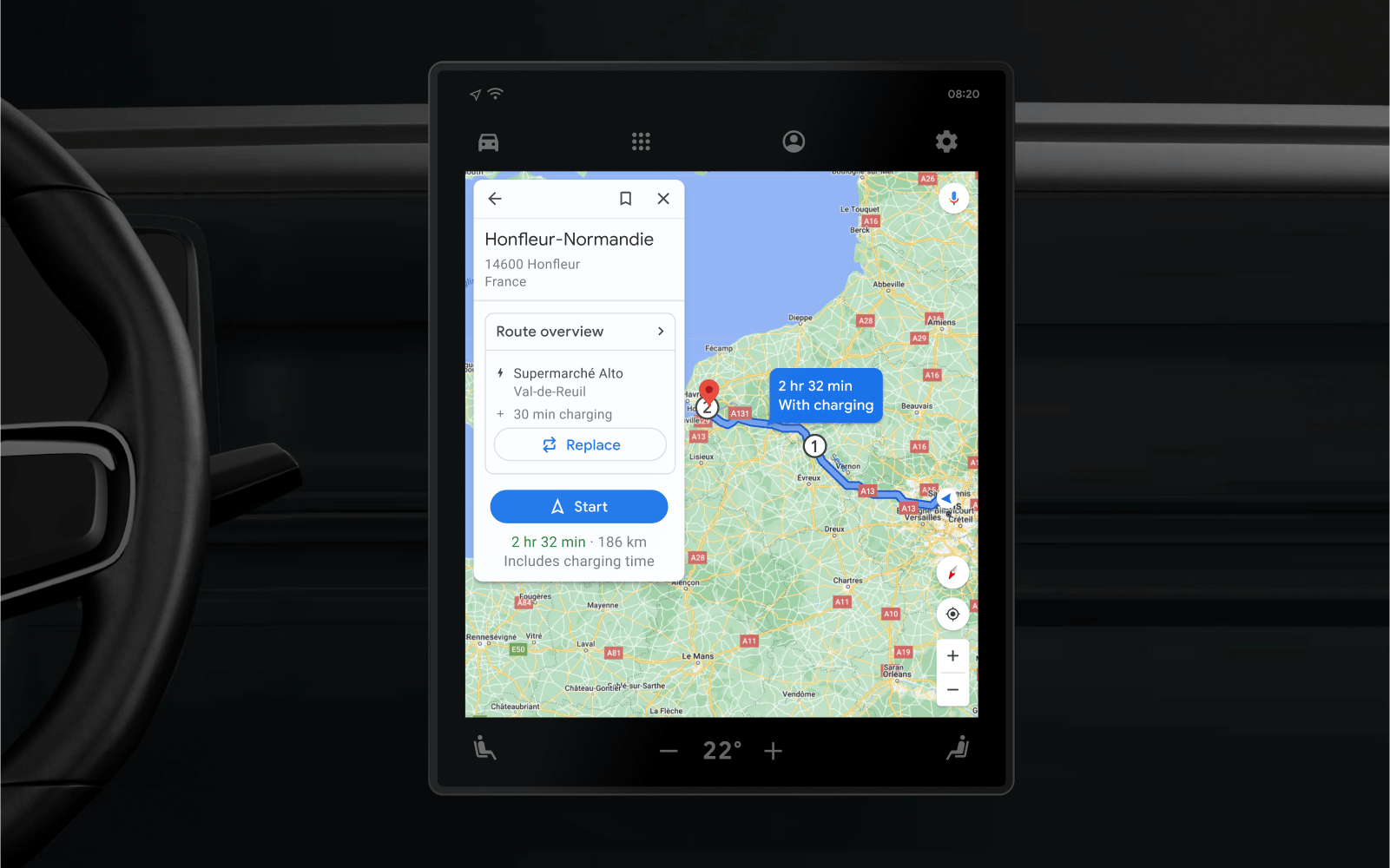

Glanceable directions are also coming soon to Android and iOS. The feature lets users track their journey from the route overview or the lock screen, which will also show updated ETAs and reminders of where to turn during navigation.

EV drivers will likewise get new Maps features soon, including navigation to charging stations with 150 kW chargers and suggested charging stops even on shorter trips, calculated based on factors like traffic, remaining battery, and predicted energy consumption.

Google Search and Lens

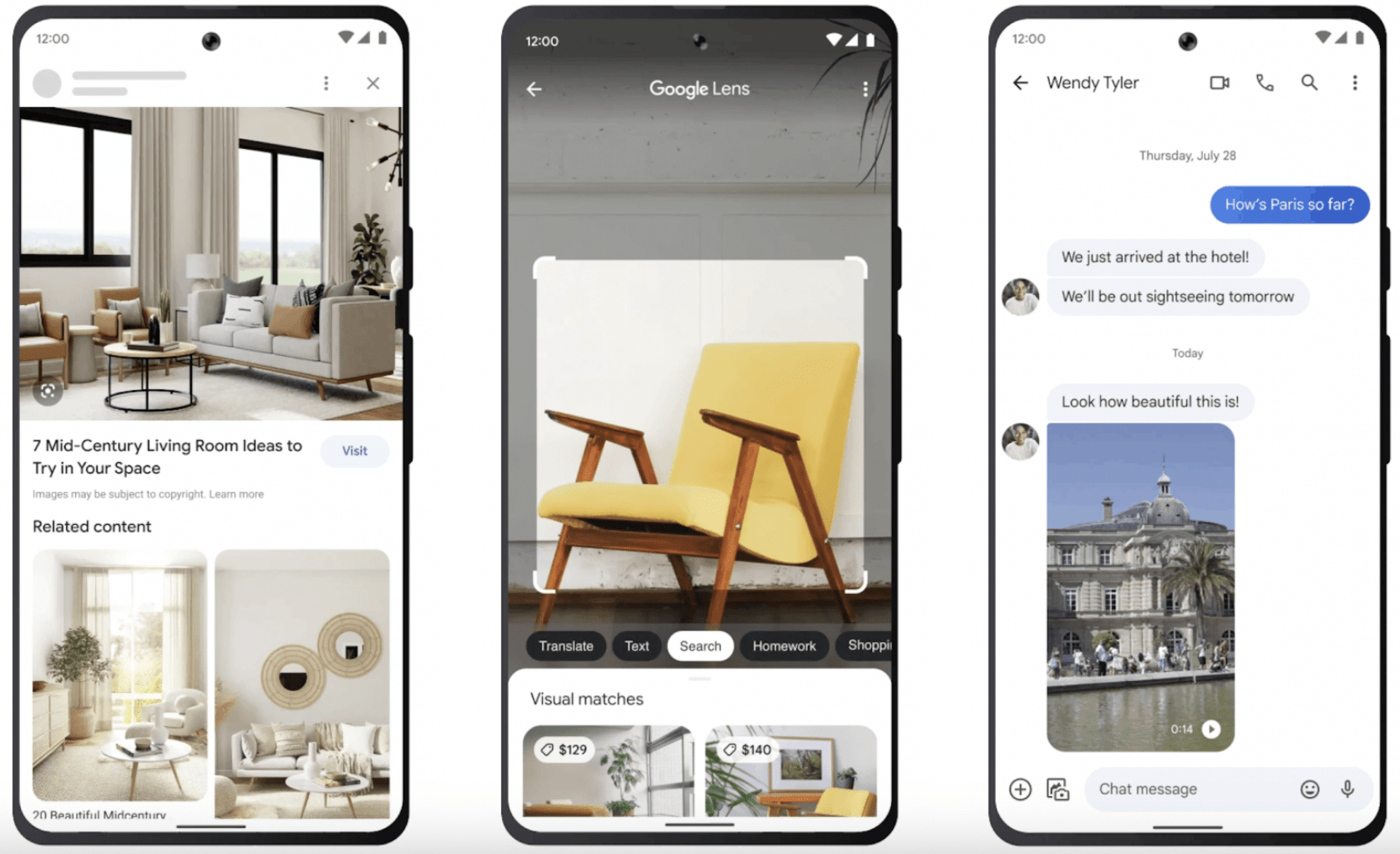

Google has also announced that Multisearch is officially live globally on mobile, in all languages and countries where Lens is available. Multisearch lets users search using text and images at the same time. The feature is accessible in the Google app by taking a photo or screenshot and running it through Google Lens.

For US-based users, Google has also added “multisearch near me,” which lets users snap a picture or take a screenshot of a dish or item, then instantly find it nearby. The feature will roll out to more regions in the coming months. Soon, Android users will also be able to use Lens to “search your screen” through Assistant, which lets them search what they see in photos or videos within apps without leaving the app itself.

Google Translate

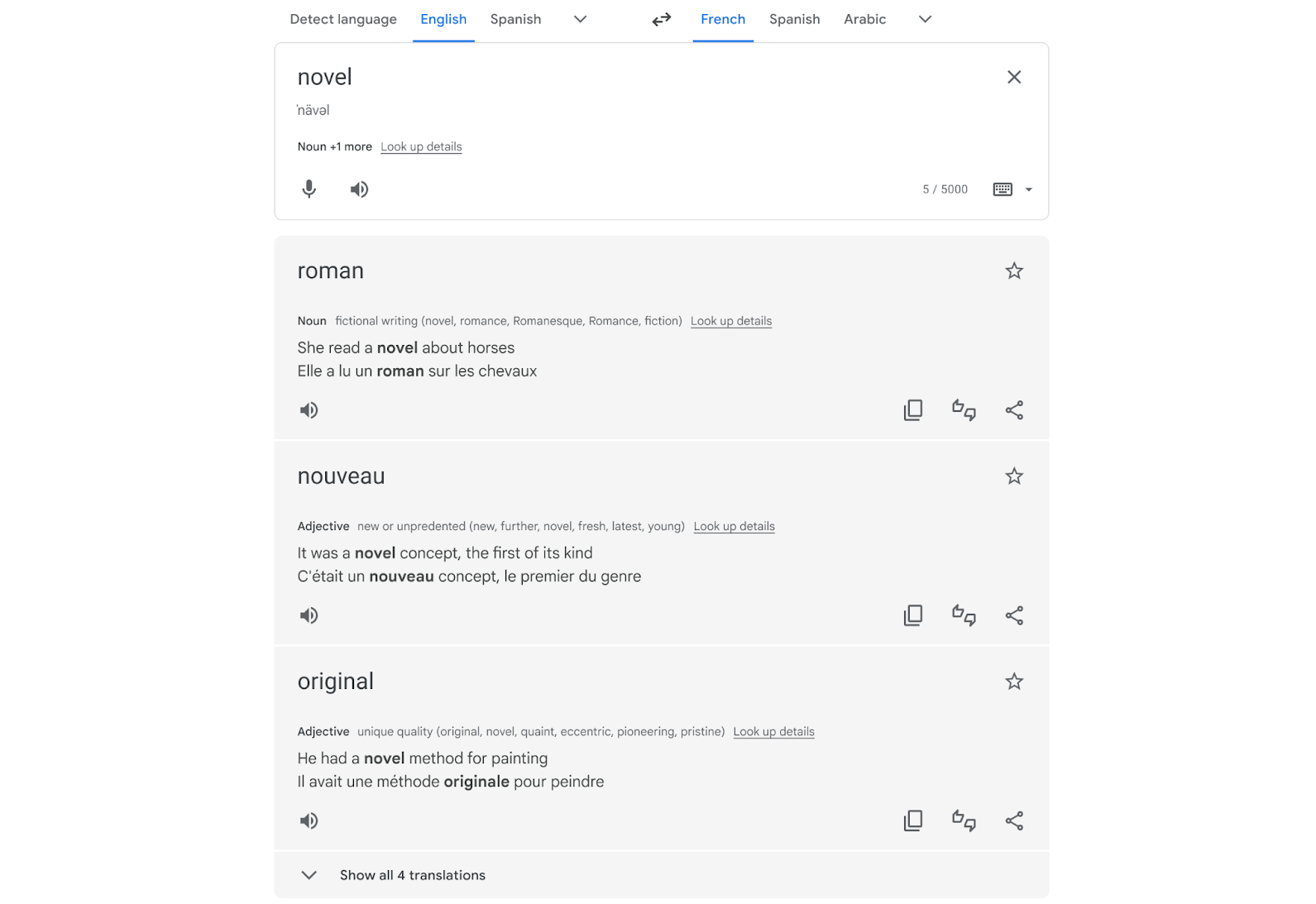

As for Translate, Google will provide richer, more contextual translations for single words and short phrases, including those with multiple meanings, helping users pick the right translation for the context. This feature will soon be available in English, French, German, Japanese, and Spanish, with more languages to follow in the coming months.

Google has also redesigned the Translate app on Android, with iOS to follow soon. The redesign brings a more glanceable screen, more accessible features, and new gestures for quickly getting to your frequent and recent translations. Translation features will also be integrated into Lens, and AR translation is now available globally.
