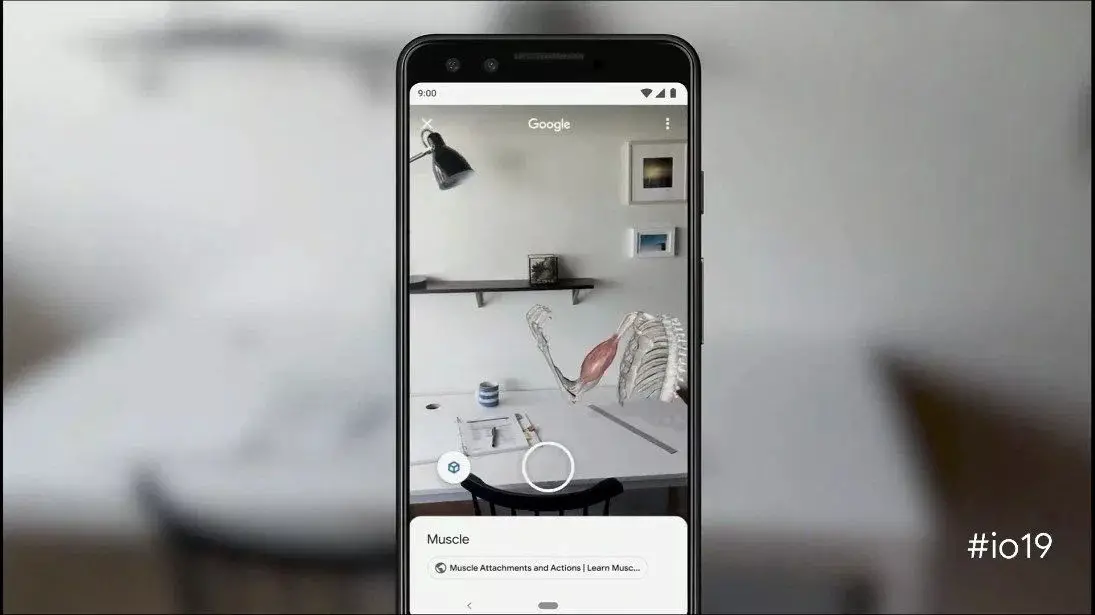

Searching on Google is easy: you enter a keyword or phrase, and Google pulls up links to websites that might have what you’re looking for. Sometimes, though, it’s hard to pin down exactly what you’re trying to find, and words alone might not be enough. That’s why Google has announced a new feature called “multisearch”.

According to Google’s description of the feature:

“At Google, we’re always dreaming up new ways to help you uncover the information you’re looking for — no matter how tricky it might be to express what you need. That’s why today, we’re introducing an entirely new way to search: using text and images at the same time. With multisearch in Lens, you can go beyond the search box and ask questions about what you see.”

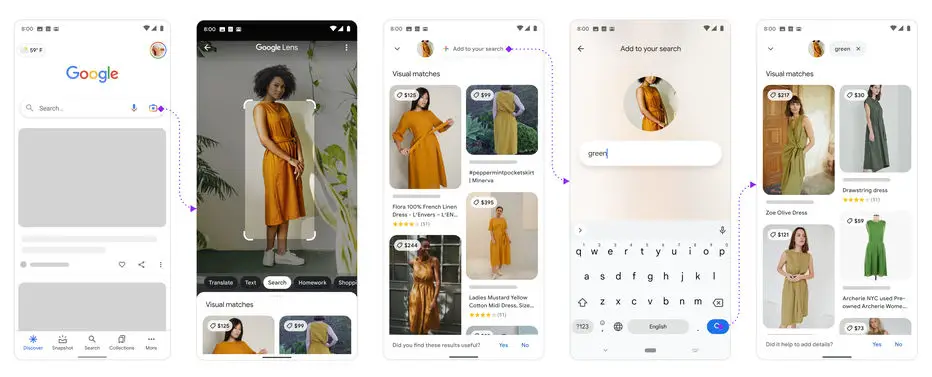

Here’s how it works. Say you see something you like online but want to find something similar in a different context. You can take a screenshot of the object, run it through Google Lens, and then add words to give it more context. Based on the information you provide, Google will try to find a match.

One example Google has given: you find a piece of clothing you like and want that exact style, but in a different color. By combining the image with the color you want, you let Google attempt to find something closer to what you’re after.

This can be handy because you don’t always know the name of an object or item. Combining images and words is akin to walking into a store with the object in your hand and asking the shopkeeper whether they have it in another style or color.

Multisearch is currently available only as a beta feature, in English, in the US, via the Google app, but it will presumably roll out to more users eventually.

Source: Google